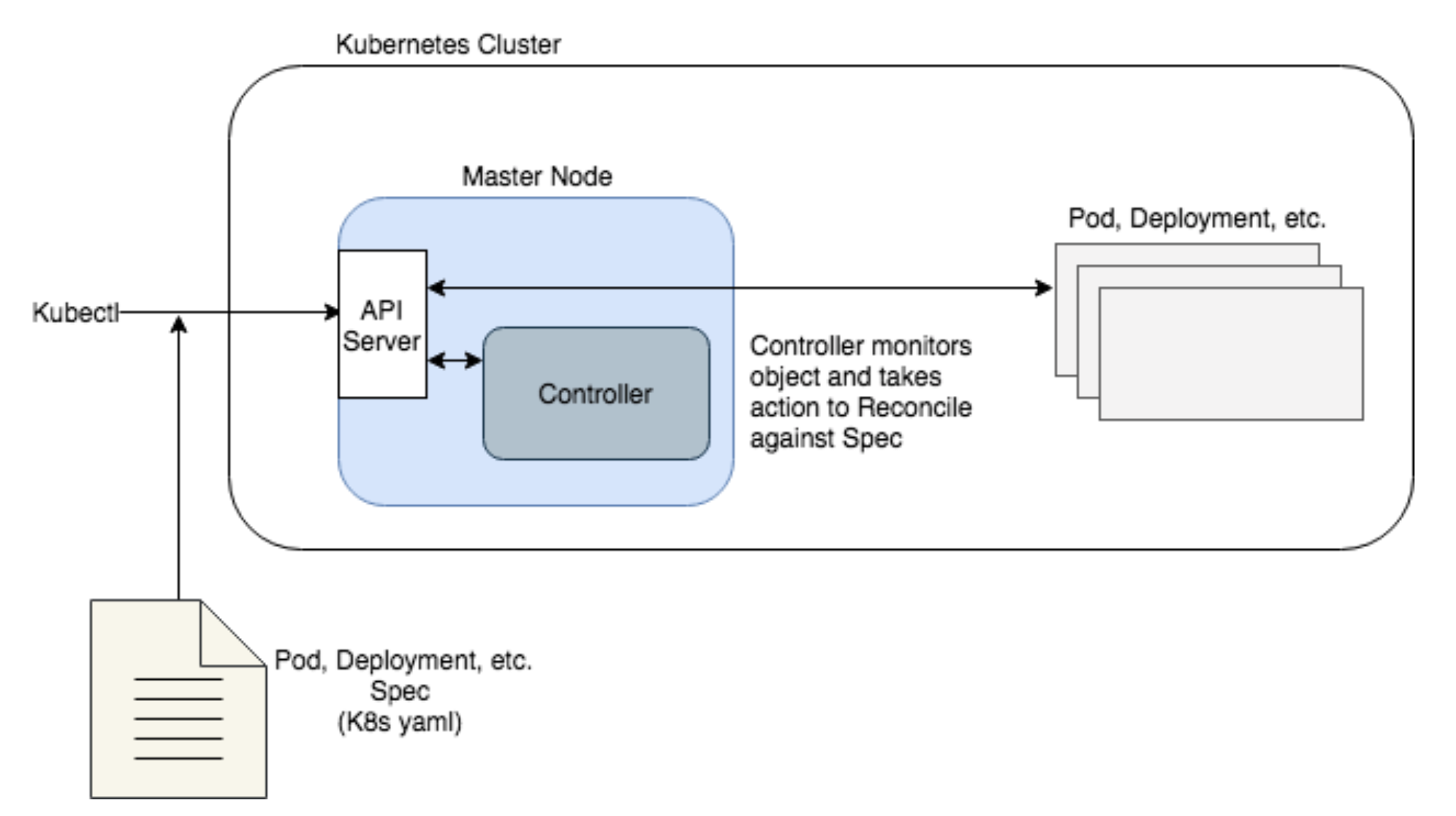

Kubernetes is an open-source system for automating the deployment, scaling, and management of containerized applications. Airflow provides operators to create and interact with EKS clusters and their compute infrastructure, and you can use Apache Airflow DAG operators with any cloud provider, not only GKE.

The airflow-on-kubernetes-part-1-a-different-kind-of-operator and Airflow Kubernetes Operator articles provide basic examples of how to use DAGs. The "Explore Airflow KubernetesExecutor on AWS and kops" article also gives a good explanation, with an example of how to use the airflow-dags and airflow-logs volumes on AWS.

When render_template_as_native_obj is set to True, Airflow renders templates with Jinja's NativeEnvironment, so rendering a template produces a native Python type instead of a string. Example: `from airflow.operators.` … `datetime(2021, 1, 1, tz="UTC"), catchup=False, render_template_as_native_obj=True, ) (task_id="extract") def extract(): data_string = '` …
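To make the effect of render_template_as_native_obj more concrete, here is a minimal sketch that uses only the standard library and does not call Airflow or Jinja. It assumes the rendered template text is a valid Python literal. The helper names `render_as_string` and `render_as_native` and the sample value are hypothetical, chosen only for illustration.

```python
import ast


def render_as_string(value):
    """Mimic default rendering: the result is always a str."""
    return str(value)


def render_as_native(value):
    """Mimic native rendering: parse the rendered text back into a
    Python literal when possible, otherwise keep the string."""
    text = str(value)
    try:
        return ast.literal_eval(text)
    except (ValueError, SyntaxError):
        # Not a Python literal (for example plain words), so keep the text.
        return text


# Hypothetical rendered template output, not taken from the original example.
rendered = '{"a": 1, "b": [2, 3]}'

as_string = render_as_string(rendered)
as_native = render_as_native(rendered)

print(type(as_string).__name__, type(as_native).__name__)
```

With the default behaviour a downstream task receives the text and has to parse it itself; with native rendering it receives a dict it can index directly.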

The command below has been tried and confirmed to work, and I am trying to replicate it locally using the KubernetesPodOperator.

Example DAG using the Kubernetes Operator: hellopythondag.py. Apache Airflow 1.10.0 brings a lot of new functionality, such as timezone support, performance optimisation for large DAGs, the Kubernetes Operator and … This boilerplate provides an Airflow cluster using the Kubernetes Executor hosted in …

FileSensor: Waits for a file to be added to a specified directory. HdfsSensor: Waits for a file to be added to HDFS. These are just a few of the many operators available in Airflow.
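The "wait for a file" behaviour of a sensor can be sketched as a simple polling loop. The code below is a standard-library illustration of the idea, not Airflow's FileSensor implementation. The function name `wait_for_file` is hypothetical; the parameter names `poke_interval` and `timeout` are borrowed from Airflow's sensor vocabulary for readability.

```python
import os
import tempfile
import time


def wait_for_file(path, poke_interval=0.1, timeout=2.0):
    """Return True once path exists, or False if timeout seconds pass first."""
    deadline = time.monotonic() + timeout
    while True:
        if os.path.exists(path):
            return True
        if time.monotonic() >= deadline:
            return False
        # Sleep between checks so the loop does not spin the CPU.
        time.sleep(poke_interval)


with tempfile.TemporaryDirectory() as watched_dir:
    target = os.path.join(watched_dir, "data.csv")

    # The file is not there yet, so the wait times out.
    missing = wait_for_file(target, poke_interval=0.05, timeout=0.2)

    # Create the file, then wait again: this time the check succeeds at once.
    open(target, "w").close()
    found = wait_for_file(target, poke_interval=0.05, timeout=0.2)

print(missing, found)
```

In a real DAG the sensor task would sit upstream of the tasks that consume the file, so they start only after the check succeeds.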

Apache Airflow provides a wide variety of operators that can be used to define tasks in a DAG. KubernetesPodOperator: Executes a task in a Kubernetes pod. The KubernetesPodOperator (KPO) runs a Docker image in a dedicated Kubernetes Pod. By abstracting calls to the Kubernetes API, the KubernetesPodOperator lets you start and run Pods from Airflow using DAG code. In this guide, you'll learn: The requirements for running the KubernetesPodOperator.

As part of Bloomberg's continued commitment to developing the Kubernetes ecosystem, we are excited to announce the Kubernetes Airflow Operator, a mechanism for Apache Airflow, a popular workflow orchestration framework, to natively launch arbitrary Kubernetes Pods using the Kubernetes API.

I am trying to create and run a pod using the Airflow KubernetesPodOperator.

Figure 1 shows the graph view of a DAG named flightsearchdag, which consists of three tasks, all of type SparkSubmitOperator. Tasks: flightsearchwaiting …

`dag = DAG(dag_id="example_template_as_python_object", schedule=None, start_date=pendulum.` …
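A DAG that launches a pod with the KubernetesPodOperator might look like the sketch below. It assumes Airflow 2.4 or later with the cncf.kubernetes provider installed and a reachable cluster. The DAG id, task id, pod name, namespace, image, and command are all hypothetical placeholders, and the operator's import path depends on the provider version. The Airflow imports sit inside `build_dag` so the argument helper can be inspected without Airflow installed.

```python
def pod_task_kwargs():
    """Arguments for a hypothetical 'hello' pod task (all values are placeholders)."""
    return {
        "task_id": "hello_pod",
        "name": "hello-pod",              # pod name in the cluster
        "namespace": "default",           # assumed namespace
        "image": "python:3.11-slim",      # assumed public image
        "cmds": ["python", "-c"],         # container entrypoint
        "arguments": ["print('hello from the pod')"],
        "get_logs": True,                 # stream pod logs into the task log
    }


def build_dag():
    """Build the DAG; requires Airflow and the cncf.kubernetes provider."""
    import pendulum
    from airflow import DAG
    # Assumed import path; older provider releases expose the operator from
    # airflow.providers.cncf.kubernetes.operators.kubernetes_pod instead.
    from airflow.providers.cncf.kubernetes.operators.pod import (
        KubernetesPodOperator,
    )

    with DAG(
        dag_id="hello_python_pod",
        schedule=None,
        start_date=pendulum.datetime(2021, 1, 1, tz="UTC"),
        catchup=False,
    ) as dag:
        # Instantiating the operator inside the DAG context registers the task.
        KubernetesPodOperator(**pod_task_kwargs())
    return dag
```

In a DAG file, a module-level assignment such as `dag = build_dag()` would be needed so the scheduler can discover the DAG.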
